As mentioned earlier when introducing the components, a DaemonSet behaves like global mode in a Swarm cluster: each Node runs at most one replica of the Pod.
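The "at most one replica per Node" rule can be pictured with a small Python sketch. This is only a simplified model, not the actual DaemonSet controller: the function name `plan_daemon_pods` and the sample Pod names are made up for illustration, and taints, node selectors, and affinity are ignored.

```python
def plan_daemon_pods(nodes, running):
    """Decide what a DaemonSet-like controller would have to do.

    nodes:   list of node names currently in the cluster
    running: dict mapping node name -> list of daemon Pod names on it
    Returns (nodes that need a Pod, Pods that should be deleted).
    """
    create_on = [n for n in nodes if not running.get(n)]
    delete = []
    for node, pods in running.items():
        if node not in nodes:
            delete.extend(pods)       # the node has left the cluster
        else:
            delete.extend(pods[1:])   # keep exactly one Pod per node
    return create_on, delete


# Hypothetical cluster state: node2 has a surplus Pod, node3 has none.
nodes = ["node1", "node2", "node3"]
running = {"node1": ["ds-a"], "node2": ["ds-b", "ds-c"]}

create_on, delete = plan_daemon_pods(nodes, running)
print(create_on)  # ['node3']
print(delete)     # ['ds-c']
```

Unlike a Deployment, there is no replica count to set: the number of Pods follows from the number of eligible nodes.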

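Typical use cases for DaemonSet

1. Running a storage daemon on every node of the cluster, such as glusterd or ceph

2. Running a log collection daemon on every node, such as fluentd or logstash

3. Running a monitoring daemon on every node, such as Prometheus Node Exporter or collectd

In fact, Kubernetes itself uses DaemonSets to run its system components: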

$ kubectl get daemonsets --namespace=kube-system
NAME                      DESIRED   CURRENT   READY   UP-TO-DATE   AVAILABLE   NODE SELECTOR            AGE
kube-flannel-ds-amd64     3         3         3       3            3           <none>                   91m
kube-flannel-ds-arm       0         0         0       0            0           <none>                   91m
kube-flannel-ds-arm64     0         0         0       0            0           <none>                   91m
kube-flannel-ds-ppc64le   0         0         0       0            0           <none>                   91m
kube-flannel-ds-s390x     0         0         0       0            0           <none>                   91m
kube-proxy                3         3         3       3            3           kubernetes.io/os=linux   106m

kube-flannel-ds and kube-proxy are responsible for running the flannel and kube-proxy components on every node, respectively. The arm, arm64, ppc64le, and s390x variants of kube-flannel-ds show 0 because this cluster has no nodes of those architectures.
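The NODE SELECTOR column in the listing above restricts which nodes a DaemonSet targets. A minimal Python sketch of that matching rule follows. The node labels, and the extra `win1` node, are hypothetical and only serve to show a node being filtered out; the helper names are made up for illustration.

```python
def parse_selector(text):
    """Parse 'k1=v1,k2=v2' (or '<none>') as printed by kubectl into a dict."""
    if text == "<none>":
        return {}
    return dict(pair.split("=", 1) for pair in text.split(","))


def eligible_nodes(nodes, selector):
    """A node is eligible when it carries every label the selector asks for."""
    return [name for name, labels in nodes.items()
            if all(labels.get(k) == v for k, v in selector.items())]


# Hypothetical node labels; win1 does not exist in the article's cluster.
nodes = {
    "node1": {"kubernetes.io/os": "linux"},
    "node2": {"kubernetes.io/os": "linux"},
    "node3": {"kubernetes.io/os": "linux"},
    "win1": {"kubernetes.io/os": "windows"},
}

print(eligible_nodes(nodes, parse_selector("kubernetes.io/os=linux")))
# ['node1', 'node2', 'node3']
print(eligible_nodes(nodes, parse_selector("<none>")))
# ['node1', 'node2', 'node3', 'win1']
```

An empty selector matches every node, which is why `<none>` alone does not explain a DESIRED count of 0.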

Listing the Pods in the kube-system namespace shows that kube-flannel-ds and kube-proxy each have one Pod running on every node:

$ kubectl get pod --namespace=kube-system -o wide
NAME                            READY   STATUS    RESTARTS   AGE    IP             NODE
coredns-7ff77c879f-g5744        1/1     Running   0          108m   10.244.0.2     node1
coredns-7ff77c879f-z87zw        1/1     Running   0          108m   10.244.0.3     node1
etcd-node1                      1/1     Running   0          108m   192.168.1.11   node1
kube-apiserver-node1            1/1     Running   0          108m   192.168.1.11   node1
kube-controller-manager-node1   1/1     Running   0          108m   192.168.1.11   node1
kube-flannel-ds-amd64-6x42s     1/1     Running   0          94m    192.168.1.12   node2
kube-flannel-ds-amd64-q2dpz     1/1     Running   0          94m    192.168.1.13   node3
kube-flannel-ds-amd64-tjvf7     1/1     Running   0          94m    192.168.1.11   node1
kube-proxy-6b99l                1/1     Running   0          94m    192.168.1.12   node2
kube-proxy-hhn9d                1/1     Running   0          108m   192.168.1.11   node1
kube-proxy-x6bzn                1/1     Running   0          94m    192.168.1.13   node3
kube-scheduler-node1            1/1     Running   0          108m   192.168.1.11   node1

Because kube-flannel-ds and kube-proxy are system components, --namespace must be passed when listing them; otherwise kubectl returns the Pods in the default namespace.
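The "one Pod per node" observation can also be checked mechanically. The sketch below parses a listing in the column layout shown above and counts the Pods of a given DaemonSet on each node. It assumes the NAME column comes first and that DaemonSet Pods are named `<daemonset>-<random suffix>`; the function name is made up for illustration.

```python
from collections import Counter

# A few rows copied from the listing above.
LISTING = """\
NAME READY STATUS RESTARTS AGE IP NODE
coredns-7ff77c879f-g5744 1/1 Running 0 108m 10.244.0.2 node1
kube-flannel-ds-amd64-6x42s 1/1 Running 0 94m 192.168.1.12 node2
kube-flannel-ds-amd64-q2dpz 1/1 Running 0 94m 192.168.1.13 node3
kube-flannel-ds-amd64-tjvf7 1/1 Running 0 94m 192.168.1.11 node1
kube-proxy-6b99l 1/1 Running 0 94m 192.168.1.12 node2
kube-proxy-hhn9d 1/1 Running 0 108m 192.168.1.11 node1
kube-proxy-x6bzn 1/1 Running 0 94m 192.168.1.13 node3
"""


def pods_per_node(listing, daemonset):
    """Count the Pods belonging to `daemonset` on each node."""
    lines = listing.splitlines()
    node_col = lines[0].split().index("NODE")
    counts = Counter()
    for line in lines[1:]:
        fields = line.split()
        # Strip the random suffix to recover the owning DaemonSet's name.
        if fields[0].rsplit("-", 1)[0] == daemonset:
            counts[fields[node_col]] += 1
    return dict(counts)


print(sorted(pods_per_node(LISTING, "kube-proxy").items()))
# [('node1', 1), ('node2', 1), ('node3', 1)]
```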

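Analyzing DaemonSet

Examining kube-flannel-ds and kube-proxy gives a better understanding of DaemonSet.

kube-flannel

When the flannel network was deployed earlier, a lengthy manifest was copied from the official source into a local kube-flannel.yml file and used to create the network. The flannel DaemonSet is defined in that file.

The DaemonSet portion of the file is excerpted below, with brief comments on a few important fields: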

apiVersion: apps/v1
kind: DaemonSet              # the syntax is almost identical to Deployment; mainly the kind differs
metadata:
  name: kube-flannel-ds-amd64
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      hostNetwork: true      # use the node's host network directly, like Docker's host network
      containers:            # defines the container for the flannel service
      - name: kube-flannel
        image: quay.io/coreos/flannel:v0.12.0-amd64
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr

kube-proxy

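Since the YAML file for kube-proxy is not available, run the following command to view its configuration.

For readability, only part of the output is shown:

$ kubectl edit daemonset kube-proxy --namespace=kube-system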

apiVersion: apps/v1
kind: DaemonSet              # again, the resource type is DaemonSet
metadata:
  labels:
    k8s-app: kube-proxy
  name: kube-proxy
  namespace: kube-system
spec:
  selector:
    matchLabels:
      k8s-app: kube-proxy
  template:
    metadata:
      creationTimestamp: null
      labels:
        k8s-app: kube-proxy
    spec:
      containers:            # defines the kube-proxy container
      - command:
        - /usr/local/bin/kube-proxy
        - --config=/var/lib/kube-proxy/config.conf
        - --hostname-override=$(NODE_NAME)
        env:
        - name: NODE_NAME
          valueFrom:
            fieldRef:
              apiVersion: v1
              fieldPath: spec.nodeName
        image: registry.aliyuncs.com/google_containers/kube-proxy:v1.18.1
        imagePullPolicy: IfNotPresent
        name: kube-proxy
status:                      # runtime state of the DaemonSet; appears only on the live object shown by kubectl edit
  currentNumberScheduled: 3  # 3 Pods are currently scheduled
  desiredNumberScheduled: 3
  numberAvailable: 3
  numberMisscheduled: 0
  numberReady: 3
  observedGeneration: 1
  updatedNumberScheduled: 3

The configuration and runtime state of every resource currently running in the Kubernetes cluster can be viewed with kubectl edit, for example kubectl edit deployment nginx.

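If, in a production environment, you have forgotten which yml file a controller resource (Deployment, DaemonSet, ReplicaSet…) was deployed from, you can edit its configuration this way.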
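When the original yml file is lost, a live object can also serve as the starting point for a new manifest once its runtime-only fields are removed. The Python sketch below shows the idea on a dictionary such as one loaded from `kubectl get daemonset kube-proxy --namespace=kube-system -o json`. The list of metadata keys to drop is an assumption that covers the common server-populated fields and may not be exhaustive; the `uid` and `resourceVersion` values are placeholders.

```python
import copy
import json

# Fields the API server fills in; assumed not to belong in a hand-written manifest.
SERVER_SIDE_METADATA = ("creationTimestamp", "generation", "managedFields",
                        "resourceVersion", "selfLink", "uid")


def to_manifest(live):
    """Return a copy of a live object without status and server-side metadata."""
    manifest = copy.deepcopy(live)
    manifest.pop("status", None)
    metadata = manifest.get("metadata", {})
    for key in SERVER_SIDE_METADATA:
        metadata.pop(key, None)
    return manifest


# Abbreviated stand-in for the live kube-proxy DaemonSet.
live = {
    "apiVersion": "apps/v1",
    "kind": "DaemonSet",
    "metadata": {"name": "kube-proxy", "namespace": "kube-system",
                 "uid": "placeholder-uid", "resourceVersion": "1"},
    "spec": {"selector": {"matchLabels": {"k8s-app": "kube-proxy"}}},
    "status": {"numberReady": 3},
}

print(json.dumps(to_manifest(live), indent=2))
```

The result keeps `apiVersion`, `kind`, the name and namespace, and `spec`, which is the part a manifest file normally contains.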

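Deploying your own DaemonSet (Prometheus)

Deploying Prometheus Node Exporter

Prometheus Node Exporter is used here as an example to demonstrate running a DaemonSet.

Prometheus is a popular system monitoring solution. Node Exporter is the Prometheus agent and runs as a daemon on every monitored node.

Pull the image on every node of the cluster. This step is optional; skipping it only makes the deployment below a little slower.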

docker pull prom/node-exporter

When Node Exporter is deployed with Docker, the command is as follows:

docker run -d -v /proc:/host/proc -v /sys:/host/sys -v /:/rootfs \
  --network host prom/node-exporter --path.procfs /host/proc \
  --path.sysfs /host/sys --collector.filesystem.ignored-mount-points \
  "^/(sys|proc|dev|host|etc)($|/)"

This command now needs to be converted into a YAML file.

[root@node1 ~]# vim node_export.yml
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: node-exporter-daemonset
spec:
  selector:
    matchLabels:
      name: prometheus
  template:
    metadata:
      labels:
        name: prometheus
    spec:
      hostNetwork: true                  # use the host network
      containers:
      - name: node-exporter              # container name
        image: prom/node-exporter        # image name
        imagePullPolicy: IfNotPresent    # pull the image only if it is not present locally
        command:                         # command run when the container starts
        - /bin/node-exporter             # note: the image ships its binary as /bin/node_exporter
        - --path.procfs
        - /host/proc
        - --path.sysfs
        - /host/sys
        - --collector.filesystem.ignored-mount-points
        - ^/(sys|proc|dev|host|etc)($|/)
        volumeMounts:                    # mount points inside the container
        - name: proc                     # must match the name of the corresponding entry under volumes
          mountPath: /host/proc
        - name: sys
          mountPath: /host/sys
        - name: root
          mountPath: /rootfs
      volumes:                           # host directories to expose
      - name: proc
        hostPath:
          path: /proc
      - name: sys
        hostPath:
          path: /sys
      - name: root
        hostPath:
          path: /

Apply the manifest:

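[root@node1 ~]# kubectl apply -f node_export.yml

Check the running Pods. Their status is RunContainerError. This is unlikely to be caused by a missing Prometheus server, because Node Exporter starts without one. A more likely cause is the command path /bin/node-exporter in the manifest: the prom/node-exporter image ships its binary as /bin/node_exporter, so the container runtime cannot execute the command.

[root@node1 ~]# kubectl get pod -o wide
NAME                            READY   STATUS              RESTARTS   AGE    IP             NODE
node-exporter-daemonset-5gdcc   0/1     RunContainerError   6          6m6s   192.168.1.13   node3
node-exporter-daemonset-tfwmm   0/1     RunContainerError   6          6m6s   192.168.1.12   node2

Deploying cAdvisor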

Write the manifest based on the docker run command that starts cAdvisor:

docker run \
  --volume /:/rootfs:ro \
  --volume /var/run:/var/run:ro \
  --volume /sys:/sys:ro \
  --volume /var/lib/docker:/var/lib/docker:ro \
  --publish 8080:8080 --detach=true --name cadvisor google/cadvisor

Write the YAML file that runs cAdvisor.
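Translating `--volume` flags into a manifest is mechanical: each `host:container[:mode]` value becomes one hostPath entry under `volumes` and one entry under `volumeMounts`, linked by a shared name. A rough Python sketch of that mapping follows. The function name and the volume names derived from the host path are illustrative choices, not something kubectl or Docker generates.

```python
import json


def convert_volumes(flags):
    """Map docker --volume values to Kubernetes (volumes, volumeMounts) lists."""
    volumes, mounts = [], []
    for flag in flags:
        host, container, *mode = flag.split(":")
        # Illustrative naming scheme: "/var/run" -> "var-run", "/" -> "root".
        name = host.strip("/").replace("/", "-") or "root"
        volumes.append({"name": name, "hostPath": {"path": host}})
        mount = {"name": name, "mountPath": container}
        if mode and "ro" in mode[0].split(","):
            mount["readOnly"] = True   # docker's :ro corresponds to readOnly
        mounts.append(mount)
    return volumes, mounts


volumes, mounts = convert_volumes([
    "/:/rootfs:ro",
    "/var/run:/var/run:ro",
    "/sys:/sys:ro",
    "/var/lib/docker:/var/lib/docker:ro",
])
print(json.dumps({"volumeMounts": mounts, "volumes": volumes}, indent=2))
```

The sketch carries the `:ro` suffix over as `readOnly: true`. The manifest in this article leaves that field out, so its mounts are writable from inside the container.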

[root@node1 ~]# vim cadvisor.yml
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: cadvisor
spec:
  selector:
    matchLabels:
      jk: cAdvisor
  template:
    metadata:
      labels:
        jk: cAdvisor
    spec:
      hostNetwork: true
      restartPolicy: Always              # always restart the container, whatever the reason it exited
      containers:
      - name: cadvisor
        image: google/cadvisor
        imagePullPolicy: IfNotPresent    # pull the image only if it is not present locally
        ports:
        - containerPort: 8080
        volumeMounts:
        - name: root
          mountPath: /rootfs
        - name: run
          mountPath: /var/run
        - name: sys
          mountPath: /sys
        - name: docker
          mountPath: /var/lib/docker
      volumes:
      - name: root
        hostPath:
          path: /
      - name: run
        hostPath:
          path: /var/run
      - name: sys
        hostPath:
          path: /sys
      - name: docker
        hostPath:
          path: /var/lib/docker

Apply the cAdvisor manifest:

[root@node1 ~]# kubectl apply -f cadvisor.yml
daemonset.apps/cadvisor created

View the DaemonSet that was created:

[root@node1 ~]# kubectl get daemonsets cadvisor
NAME       DESIRED   CURRENT   READY   UP-TO-DATE   AVAILABLE   NODE SELECTOR   AGE
cadvisor   2         2         2       2            2           <none>          82s

View the Pods created by the DaemonSet:

[root@node1 ~]# kubectl get pod -o wide -l jk=cAdvisor

NAME READY STATUS RESTARTS AGE IP NODE

cadvisor-2lkwf 1/1 Running 0 4m7s 192.168.1.13 node3

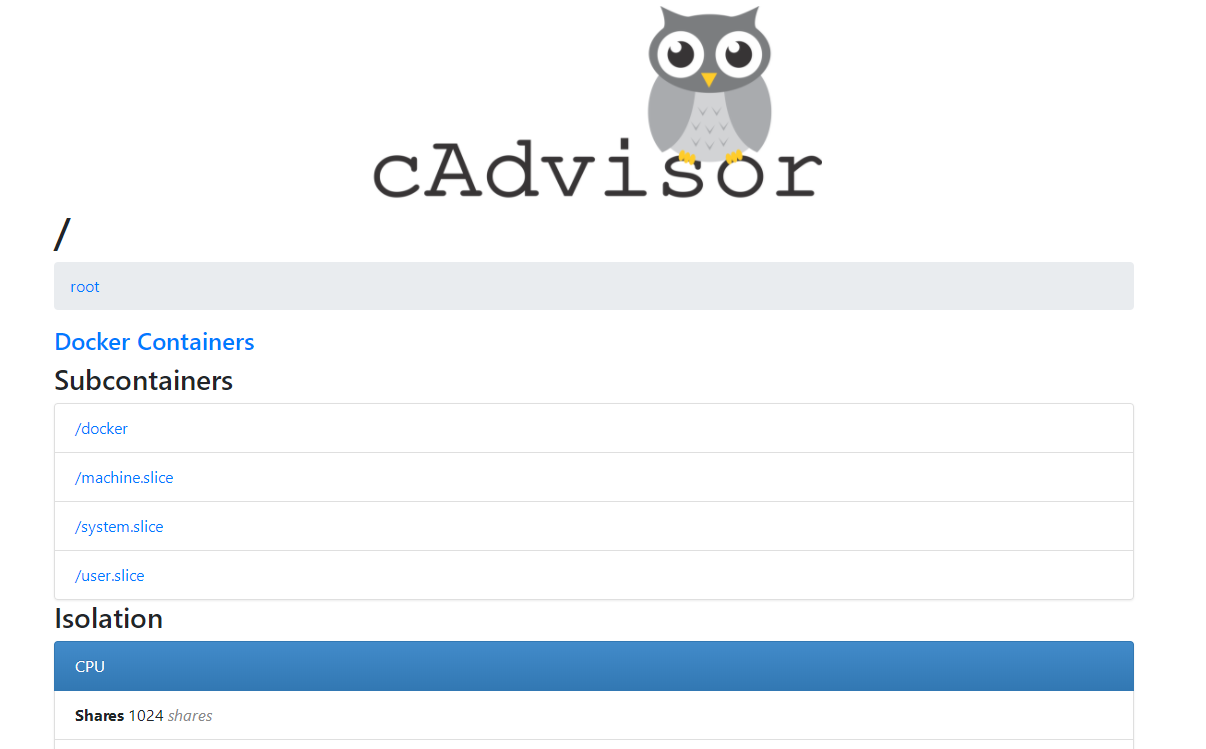

cadvisor-8s2xn 1/1 Running 0 4m7s 192.168.1.12 node2访问查看http://192.168.1.12:8080和http://192.168.1.13:8080

如图所示
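The same reachability check can be scripted instead of done in a browser. The Python sketch below requests the root page on port 8080 of each node and records the HTTP status. The function name `probe` and its injectable `fetch` parameter are illustrative; the node addresses in the usage note are the ones from this article and will differ in other clusters.

```python
from urllib.request import urlopen


def probe(hosts, port=8080, timeout=3, fetch=urlopen):
    """Return {host: HTTP status, or a short error string if unreachable}."""
    results = {}
    for host in hosts:
        url = f"http://{host}:{port}/"
        try:
            with fetch(url, timeout=timeout) as response:
                results[host] = response.status
        except OSError as exc:  # URLError and HTTPError are subclasses of OSError
            results[host] = f"unreachable: {exc}"
    return results
```

From a machine that can reach the nodes, `probe(["192.168.1.12", "192.168.1.13"])` should report a status for each node when cAdvisor is listening on the host network as configured above.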

A separate article on installing Prometheus to monitor DaemonSets will follow later.